AI Content Detection: How It Works and Why It Fails

How AI content detectors actually work, why accuracy is limited, and what marketers and publishers should do about AI-generated content in 2026.

AI content detection is the practice of using classifiers to estimate the probability that a given piece of text was generated by a large language model. Detectors produce a probability score, not a verdict, and current tools are accurate enough to be useful for screening but not accurate enough to be used as evidence of cheating or policy violation.

This guide explains how detectors work, why they fail in predictable ways, which tools are worth using in 2026, and how publishers and marketers should think about AI-generated content under Google's guidelines.

How AI Content Detectors Actually Work

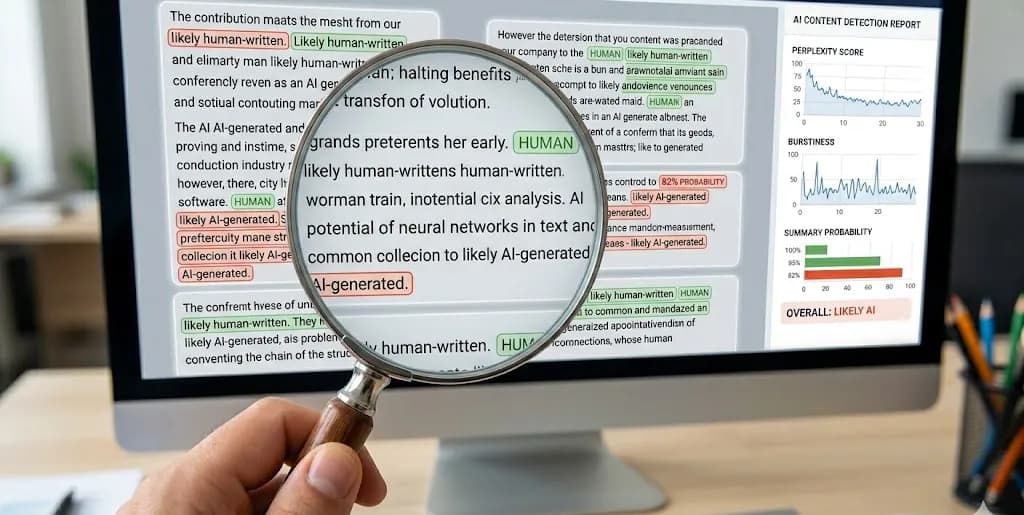

Most detectors are supervised classifiers. They take text as input and output a score, typically between 0 (certainly human) and 1 (certainly AI). The underlying models use several signals:

1. Perplexity

Perplexity measures how "surprised" a language model is by the text. Human writing tends to have irregular word choices, typos, and idiosyncratic phrasing, which produce high perplexity. AI writing tends to favor high-probability tokens at each step, which produces uniformly low perplexity.

This is the oldest and most common signal. It is also the easiest to defeat — adding typos, unusual synonyms, or personal anecdotes increases perplexity enough to flip most classifiers to "human."
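To make the signal concrete, here is a minimal sketch of the perplexity calculation itself. The per-token probabilities are made-up illustrative numbers, not output from any real model; a real detector would obtain them from a scoring language model.

```python
import math

def perplexity(token_probs):
    """Perplexity = exp of the average negative log-probability per token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log_prob = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log_prob)

# Hypothetical probabilities a scoring model assigned to each token.
predictable_text = [0.60, 0.55, 0.70, 0.65, 0.60]  # model is rarely surprised
surprising_text = [0.60, 0.02, 0.40, 0.01, 0.30]   # a few very unlikely tokens

print(round(perplexity(predictable_text), 2))
print(round(perplexity(surprising_text), 2))
```

A couple of very unlikely tokens is enough to raise the average sharply, which is why inserting typos or unusual synonyms moves the score so easily.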

2. Burstiness

Burstiness measures sentence-length variance. Humans alternate between long complex sentences and short punchy ones. Early LLM outputs produced eerily consistent sentence length. Modern models (GPT-4+, Claude 3+, Gemini 1.5+) have mostly closed this gap, but burstiness remains a secondary signal.
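A rough sketch of the sentence-length version of this signal follows. It assumes a naive split on sentence-ending punctuation and uses the coefficient of variation as the burstiness measure; both choices are illustrative assumptions rather than any vendor's definition.

```python
import re
import statistics

def sentence_lengths(text):
    """Split on ., !, ? and return the word count of each non-empty sentence."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]

def burstiness(text):
    """Coefficient of variation of sentence length (stdev / mean)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # variance is undefined for a single sentence
    return statistics.pstdev(lengths) / statistics.mean(lengths)

uniform = "The tool scans the text. The model scores the words. The report lists the flags."
varied = ("It failed. After three weeks of testing across every department "
          "in the company, nobody could explain why. Odd.")

print(burstiness(uniform))
print(burstiness(varied))
```

The first sample has three sentences of identical length, so its score is zero; the second mixes very short and long sentences and scores much higher.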

3. N-gram Distribution and Token-Level Features

Classifiers trained on large corpora of human and AI text learn statistical fingerprints — common phrases, transition patterns, specific word frequencies. The phrase "in today's fast-paced world" appears in AI output at roughly 3-5x the rate of comparable human writing. Classifiers pick up on thousands of such signals.
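The kind of rate comparison behind such a fingerprint can be sketched as follows. The two samples are tiny made-up examples, so the resulting ratio only illustrates the arithmetic and says nothing about real-world frequencies.

```python
import re

PHRASE = "in today's fast-paced world"

def phrase_rate_per_1k(texts, phrase):
    """Occurrences of `phrase` per 1,000 words across a list of documents."""
    total_words = sum(len(t.split()) for t in texts)
    pattern = re.escape(phrase.lower())
    hits = sum(len(re.findall(pattern, t.lower())) for t in texts)
    return 1000 * hits / total_words if total_words else 0.0

def rate_ratio(ai_texts, human_texts, phrase):
    """How many times more often the phrase appears in the AI sample."""
    human_rate = phrase_rate_per_1k(human_texts, phrase)
    if human_rate == 0:
        return float("inf")
    return phrase_rate_per_1k(ai_texts, phrase) / human_rate

# Tiny made-up samples; a real comparison needs large, matched corpora.
ai_sample = [
    "In today's fast-paced world, brands must adapt quickly.",
    "Teams thrive in today's fast-paced world of content.",
]
human_sample = [
    "In today's fast-paced world nobody reads the manual.",
    "We shipped late because the printer jammed again on Friday.",
]

print(rate_ratio(ai_sample, human_sample, PHRASE))
```

A trained classifier effectively learns thousands of such ratios at once and weights them together, rather than checking phrases one at a time.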

4. Embedding-Based Classifiers

The most recent detectors use transformer-based embeddings rather than hand-crafted features. They compare the embedding of the input text to known distributions of AI-generated and human-written text. These are more accurate on long text but still vulnerable to paraphrasing and hybrid-authored content.
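As a simplified illustration of comparing an input embedding to known distributions, the sketch below scores a vector by its cosine similarity to the centroid of each class. The three-dimensional vectors are hypothetical stand-ins for real embeddings, and production detectors typically train a classifier on top of the embeddings rather than using plain centroids.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def centroid(vectors):
    """Component-wise mean of a list of vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]

def ai_score(vec, human_centroid, ai_centroid):
    """Map the similarity gap to 0..1; 0.5 means equally close to both classes."""
    gap = cosine(vec, ai_centroid) - cosine(vec, human_centroid)  # in [-2, 2]
    return 0.5 + gap / 4

# Hypothetical embeddings of labeled training texts.
human_vectors = [[1.0, 0.0, 0.0], [0.9, 0.1, 0.0]]
ai_vectors = [[0.0, 1.0, 0.0], [0.1, 0.9, 0.0]]

human_c = centroid(human_vectors)
ai_c = centroid(ai_vectors)

print(ai_score([0.0, 1.0, 0.0], human_c, ai_c))  # close to the AI cluster
print(ai_score([1.0, 1.0, 0.0], human_c, ai_c))  # halfway between clusters
```

The second input sits between the two clusters and lands at the midpoint of the scale, which mirrors how hybrid-authored text produces ambiguous scores.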

5. Watermarking (Mostly Theoretical)

Watermarking schemes embed a subtle statistical signal into AI-generated text by shifting token probabilities in a way that is invisible to readers but detectable by whoever holds the vendor's key. Deployment remains limited: Google DeepMind has shipped SynthID text watermarking in Gemini and open-sourced it, OpenAI has demonstrated a prototype without releasing it, and most production LLMs still ship without a watermark. Watermarks are also weakened or destroyed by paraphrasing.
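One published family of schemes, the "green list" approach described by Kirchenbauer et al. (2023), gives a sense of how detection could work. The sketch below is a toy version under stated assumptions: the key, the hash-based partition, the vocabulary, and the greedy generator are all illustrative and do not reflect any vendor's implementation.

```python
import hashlib
import math

GREEN_FRACTION = 0.5  # share of the vocabulary marked "green" at each step

def is_green(prev_token, token, key="demo-key"):
    """Pseudo-randomly assign `token` to the green list, seeded by the previous token."""
    digest = hashlib.sha256(f"{key}|{prev_token}|{token}".encode()).digest()
    return digest[0] / 256 < GREEN_FRACTION

def watermark_z_score(tokens, key="demo-key"):
    """z-score of the green-token count against the no-watermark expectation."""
    n = len(tokens) - 1  # number of scored (previous, current) pairs
    if n < 1:
        return 0.0
    green = sum(is_green(p, t, key) for p, t in zip(tokens, tokens[1:]))
    expected = GREEN_FRACTION * n
    std = math.sqrt(n * GREEN_FRACTION * (1 - GREEN_FRACTION))
    return (green - expected) / std

def generate_watermarked(vocab, length, key="demo-key"):
    """Toy generator: always emit the first vocabulary word that is green."""
    tokens = [vocab[0]]
    for _ in range(length):
        prev = tokens[-1]
        choice = next((w for w in vocab if is_green(prev, w, key)), vocab[0])
        tokens.append(choice)
    return tokens

vocab = [f"w{i}" for i in range(20)]  # hypothetical 20-word vocabulary
marked = generate_watermarked(vocab, 50)
print(watermark_z_score(marked))
```

Because each token's green or red status depends on the token before it, swapping words during a paraphrase changes the scored pairs and pulls the green count back toward chance, which is why the signal does not survive rewriting well.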

Why Detection Accuracy Is Inherently Limited

Four structural reasons explain why detectors will never reach 99%+ accuracy across all conditions:

- AI writing improves faster than detectors. Each new model generation produces text closer to the distribution of human writing. Detectors trained on GPT-3.5 output misfire on GPT-4 or Claude 3.5. Detector vendors retrain quarterly, but they are always behind.

- Hybrid authorship is the norm, not the exception. Most real-world content is neither "purely human" nor "purely AI." A human writes a draft, an AI edits, a human revises, an AI polishes. Detectors score this as ambiguous, because it is ambiguous.

- Short text is undetectable. Below roughly 250 words, signals are too sparse for any classifier to exceed chance-level accuracy. Social posts, product descriptions, and most email copy are below this threshold.

- Adversarial paraphrasing defeats every detector. Running AI text through a second LLM with a "rewrite this more naturally" prompt reduces detector confidence to near-zero in most tools. This is a one-click workflow.

Published independent research (Weber-Wulff et al., 2023; Sadasivan et al., 2024) consistently finds real-world accuracy of 60-80% on pure AI text, dropping to 50-60% on mixed or paraphrased content, which is effectively coin-flip territory.

The Major AI Content Detection Tools in 2026

| Tool | Strengths | Weaknesses | Typical Use Case |
|---|---|---|---|
| Originality.ai | Fast bulk scanning, team features, plagiarism + AI combined | Overconfident on short text, high false positives on formal business writing | Content agencies screening incoming submissions |
| GPTZero | Free tier, classroom-friendly UX, per-sentence highlighting | Lower accuracy than paid competitors, frequent false positives on non-native-English writing | Education, quick spot-checks |
| Copyleaks AI Detector | Strong enterprise integration, SOC 2 compliance | Slow on large batches, opaque scoring | Enterprise compliance workflows |
| Turnitin AI Writing Detection | Built into academic workflows | Only available to Turnitin institutional subscribers | Academic integrity reviews |
| Winston AI | Readable reports, dashboard | Smaller training corpus than leaders | SMB content marketing |
| Scribbr AI Detector | Free, decent accuracy on English | Reliable only on English, limited features | Students, freelancers |

No tool on this list should be trusted as a sole source of truth. Treat scores as one signal among many.

False Positives: The Real Risk

False positives — human text flagged as AI — are not just annoying. They have ended careers, gotten students expelled, and killed freelance relationships. Patterns that commonly trigger false positives:

- Non-native English writing — regular sentence structures, limited vocabulary variance, and some grammatical patterns mirror AI output signals.

- Technical or legal writing — by nature low-perplexity, formal, structured. Many detectors flag style guides, terms of service, and API documentation as AI-generated.

- Writing by careful, structured thinkers — well-organized, edited, consistent-style writing scores higher than stream-of-consciousness writing.

- Content written with grammar assistants (Grammarly, Hemingway) — these tools smooth text toward LLM-like patterns.

- Academic writing — tightly edited, formal, full of topic sentences. Multiple peer-reviewed studies have documented systematic bias against academic writing.

Published 2023 research from Stanford (Liang et al.) found that seven widely used GPT detectors misclassified, on average, 61% of TOEFL essays written by non-native English speakers as AI-generated. That is a devastating bias with real consequences in academic settings.
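The arithmetic behind why a flag is not proof can be sketched with Bayes' rule. The prevalence and true-positive rate below are hypothetical numbers chosen for illustration; only the 61% false-positive rate comes from the study cited above.

```python
def prob_ai_given_flag(prevalence, true_positive_rate, false_positive_rate):
    """Probability that a flagged text really is AI-generated (Bayes' rule)."""
    flagged_ai = true_positive_rate * prevalence
    flagged_human = false_positive_rate * (1 - prevalence)
    total_flagged = flagged_ai + flagged_human
    if total_flagged == 0:
        raise ValueError("no text would be flagged with these rates")
    return flagged_ai / total_flagged

# Hypothetical setting: 10% of submissions are AI-written and the
# detector catches 80% of them.
typical = prob_ai_given_flag(prevalence=0.10, true_positive_rate=0.80,
                             false_positive_rate=0.10)
# Same setting, but with the 61% false-positive rate reported for
# non-native English essays.
biased = prob_ai_given_flag(prevalence=0.10, true_positive_rate=0.80,
                            false_positive_rate=0.61)

print(round(typical, 3))
print(round(biased, 3))
```

Under these assumptions, fewer than half of flagged texts are actually AI-written even at a 10% false-positive rate, and the share falls much further for the writers the detector is biased against.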

What Google Actually Says About AI Content

Google's position, as of the latest Search Central guidance, is that AI-generated content is not inherently penalized. What is penalized is unhelpful, thin, or spammy content regardless of how it was produced.

Key points from Google's guidance:

- "Our focus is on the quality of content, not how it is produced."

- "Using AI doesn't give content any special gains. It's just content. If it's useful, helpful, original, and satisfies E-E-A-T, it may do well."

- "Using automation, including AI, to generate content with the primary purpose of manipulating search rankings is a violation of our spam policies."

The practical translation: AI-assisted content that passes human editorial review, offers genuine value, and includes E-E-A-T signals is fine. Bulk-published AI output with no editorial layer is spam.

Google has not indicated that it relies on third-party AI detectors for ranking. Its public guidance emphasizes content quality and site-level trust rather than how content was produced, so a detector giving your content an "87% AI" score has no direct bearing on how Google ranks that content.

Practical Recommendations for Publishers

For Marketing Teams

- Use AI for drafting, not publishing. Human editorial review is non-negotiable. The editor adds the specific examples, domain expertise, and opinions that differentiate content.

- Measure what matters: engagement, conversion, content decay, and citations — not detector scores.

- Disclose AI assistance in author bios or editorial policy when meaningful. Transparency is a trust signal for both readers and algorithms.

- Run detectors as a quality flag, not a gate. High-AI-probability scores on your drafts often correlate with generic, low-differentiation content. Use them as a prompt to add specifics, not as a pass/fail check.
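The "flag, not gate" idea can be expressed as a small triage rule. This is a sketch under assumptions: the 0.7 threshold and the action labels are hypothetical, and the 250-word floor is the rough figure given earlier in this guide.

```python
MIN_WORDS = 250  # below this, the guide treats detector scores as unreliable

def editorial_action(text, ai_score, flag_threshold=0.7):
    """Turn a detector score into an editing prompt; never into a rejection."""
    word_count = len(text.split())
    if word_count < MIN_WORDS:
        return "ignore score: text too short"
    if ai_score >= flag_threshold:
        return "revise: add specific examples, data, or opinion"
    return "proceed to normal editorial review"

draft = "word " * 300  # placeholder standing in for a 300-word draft
print(editorial_action(draft, ai_score=0.85))
print(editorial_action("A short product blurb.", ai_score=0.95))
```

Note that no branch rejects the draft outright: a high score only routes it back for more specifics.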

For Content Agencies

- Don't use detectors as contractual compliance proof. False positives will cost you clients and writers.

- Contract on outcomes: originality, factual accuracy, SEO performance, conversion — not AI probability scores.

- Establish editorial workflows that make hybrid AI-human writing the explicit norm, with defined review gates.

For Educators

- Detector scores are not evidence. The academic consensus is that no current detector meets the evidentiary bar for accusations of misconduct.

- Redesign assessments to reduce the incentive for pure AI submission: in-class writing, iterative drafts with source comments, oral defenses.

- Teach prompt literacy — students will use AI; the skill to develop is critical evaluation of AI output.

For SEO-Focused Content

- Focus on LLM-citability, not LLM-avoidance. The strategic question in 2026 is how to be cited by AI search tools, not how to avoid being detected by AI classifiers.

- Quotable definitions, named frameworks, proprietary data, and identifiable expertise are the content patterns that survive both Google quality review and AI-search citation.

The Future of AI Content Detection

Several trends will reshape the landscape by 2027:

- Detection will move to behavioral signals. Static text analysis is a losing battle. Future detection will combine authorship verification (keystroke dynamics, revision history, biometric writing profiles) with text analysis.

- Provenance frameworks will matter more than detectors. C2PA (Coalition for Content Provenance and Authenticity) and similar standards aim to cryptographically sign content at creation. Adoption is slow but meaningful in journalism and stock imagery.

- Regulation will focus on disclosure, not detection. The EU AI Act and various state-level US laws require disclosure when AI is used in consumer-facing contexts, rather than attempting post-hoc detection.

- The distinction will soften. By 2027, most professional writing will be meaningfully AI-assisted. The question "was this written by AI?" will be analogous to "was this written with spellcheck?" — technically answerable but increasingly irrelevant.

Frequently Asked Questions (FAQ)

Q1: Can Google tell if my content is AI-generated?

Google has detection capabilities but does not use them to directly penalize AI content. It penalizes low-quality content regardless of origin. AI-assisted content that passes human editorial review and provides genuine value ranks normally.

Q2: Which AI content detector is the most accurate?

Originality.ai and Copyleaks tend to score highest in independent benchmarks on long-form pure-AI text. No tool is reliably accurate on mixed or paraphrased content, and all detectors produce meaningful false-positive rates on formal writing by humans.

Q3: What happens if my human-written content is flagged as AI?

Treat the score as one imperfect signal. If it matters (academic context, client-facing work), preserve evidence of your writing process — drafts, revision history, research notes. Consider running the text through a second detector; disagreement between detectors is common and revealing.
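A simple way to record that disagreement is to compare the scores side by side. The sketch below assumes you have already collected a 0-to-1 score from each tool by hand; the detector names and the 0.3 spread threshold are hypothetical.

```python
import statistics

def compare_detectors(scores, spread_threshold=0.3):
    """Summarize AI-probability scores (0..1) from several detectors."""
    if len(scores) < 2:
        raise ValueError("need scores from at least two detectors")
    values = list(scores.values())
    spread = max(values) - min(values)
    return {
        "mean": statistics.mean(values),
        "spread": spread,
        "disagree": spread > spread_threshold,
    }

result = compare_detectors({"detector_a": 0.91, "detector_b": 0.34})
print(result)
```

A large spread is worth keeping alongside your drafts and revision history, since it shows that the tools themselves do not agree about the same text.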

Q4: Should I paraphrase AI output to avoid detection?

If your goal is to avoid detection rather than to produce genuinely valuable content, you are optimizing for the wrong outcome. The sustainable strategy is producing content that is useful enough that detection is irrelevant — content with original data, domain expertise, or unique perspective that AI cannot easily replicate.

References

- Google Search Central: Helpful content updates

- Google: Our approach to AI-generated content

- Stanford HAI: GPT detectors biased against non-native English writers (Liang et al., 2023)

- Weber-Wulff et al.: Testing of detection tools for AI-generated text (2023)